Best AI Agent Stack (2026)

The AI agent stack is the fastest-growing architecture in 2026. It combines a large language model (LLM) for reasoning, an agent framework for orchestration and tool use, and a vector database for retrieval-augmented generation (RAG). This stack powers chatbots, copilots, autonomous agents, and any application that needs LLM-driven intelligence.

Who is this for?

- Teams building RAG-powered chatbots or search
- Companies adding AI copilot features to existing products
- Developers building autonomous agents with tool use
- Anyone evaluating LangChain vs Haystack vs CrewAI

How it works

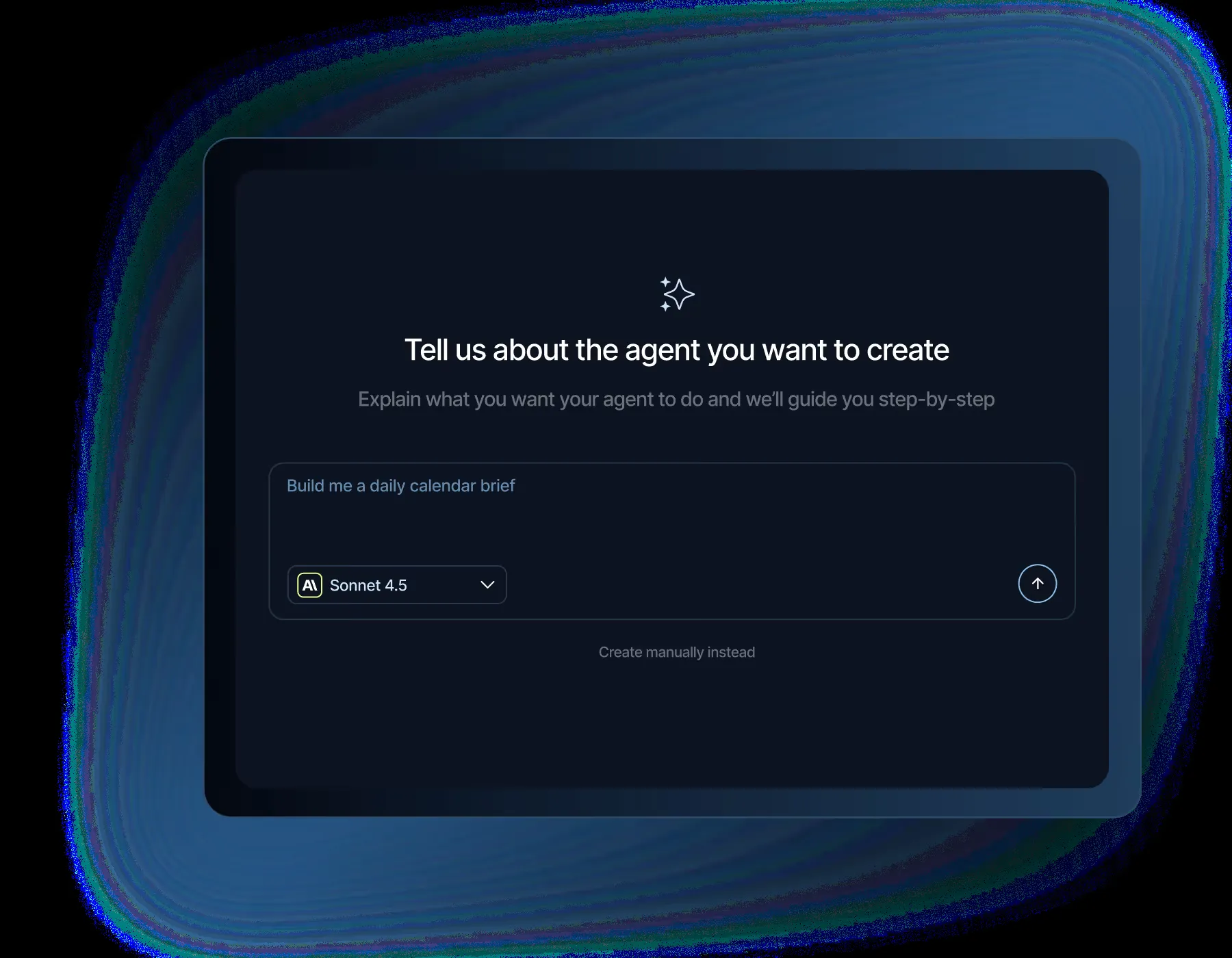

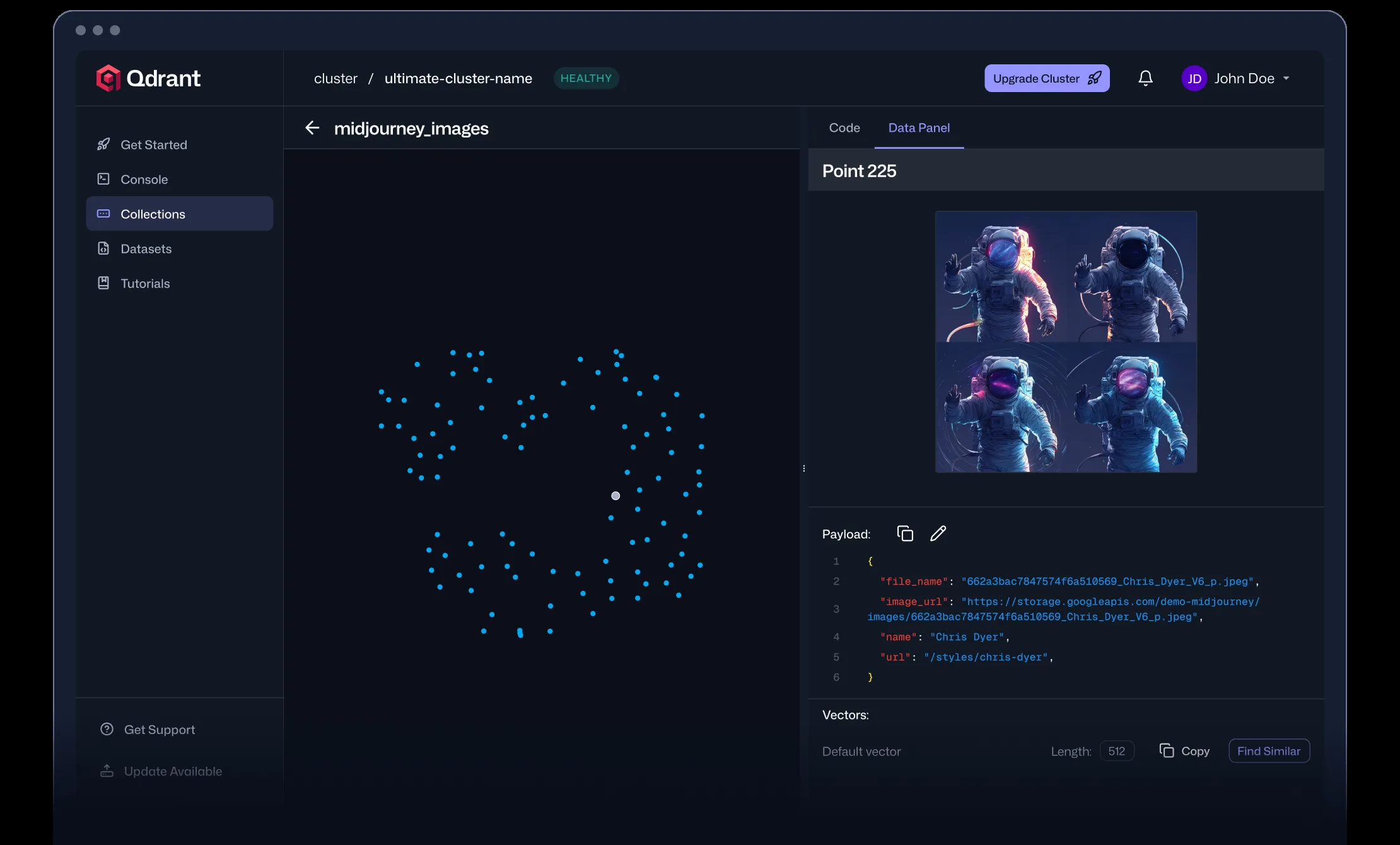

An LLM provider (OpenAI, Anthropic) handles reasoning and text generation. An agent framework (LangChain, Haystack) orchestrates the LLM with tools, memory, and retrieval. A vector database (Pinecone, Qdrant) stores embeddings for semantic search and RAG. The framework queries the vector DB, augments the prompt with relevant context, and sends it to the LLM.
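The retrieve, augment, generate flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how LangChain, Haystack, Pinecone, or Qdrant are implemented: `embed` is a toy bag-of-words stand-in for a real embedding model, `InMemoryVectorStore` is a hypothetical replacement for a vector database, and `fake_llm` is a stub where a call to an LLM provider would go.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding' (assumption: stands in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        # Store the document next to its embedding.
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        # Rank stored documents by similarity to the query embedding.
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    """Augment the user question with the retrieved context."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


def fake_llm(prompt: str) -> str:
    """Stub LLM (assumption: a real provider call would replace this)."""
    return f"[stub LLM received {len(prompt)} characters]"


def answer(question: str, store: InMemoryVectorStore, llm=fake_llm, k: int = 2) -> str:
    """The framework's job: retrieve, augment the prompt, then call the LLM."""
    context = store.search(question, k=k)
    return llm(build_prompt(question, context))


if __name__ == "__main__":
    store = InMemoryVectorStore()
    store.add("Refunds are processed within 5 business days.")
    store.add("Our office is located in Berlin.")
    print(answer("How long do refunds take?", store, k=1))
```

In a real stack, each of the three stand-ins would be swapped for the corresponding component, while the shape of `answer` stays roughly the same.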

Default recommendation based on community adoption metrics

Recommended tools

LLM Provider

OpenAI: 2,880 Stack Overflow questions.

In OpenAI's own words: "We believe our research will eventually lead to artificial general intelligence, a system that can solve human-level problems. Building safe and beneficial AGI is our mission."

Agent Framework

Vector Database

How recommendations change with your constraints

The same architecture adapts to your cloud, budget, and deployment preferences. Here's what our algorithm recommends for common scenarios:

Production RAG

Default stack for production RAG applications — proven tools with the largest ecosystems.

Open Source Only

Fully open-source agent stack for teams that need to self-host everything.

Frequently asked questions

Do I need a vector database?

For RAG applications, yes. The vector DB stores document embeddings so the LLM can retrieve relevant context. Without it, the LLM only has its training data. For simple chatbots without document retrieval, you can skip it.

LangChain vs Haystack?

LangChain has the larger ecosystem (135k GitHub stars) and more integrations. Haystack is more opinionated and production-focused. LangChain is better for prototyping; Haystack for production pipelines.

Which LLM provider should I use?

OpenAI (GPT-4) for general-purpose quality. Anthropic (Claude) for longer context and safety. Groq for speed. Open-source models via Together AI or Replicate for cost control.
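The guidance above amounts to a lookup from your main priority to a provider. A small sketch of that mapping follows; the `Priority` enum and `suggest_provider` function are hypothetical names introduced for illustration, and the table only restates the answer above rather than any benchmark.

```python
from enum import Enum


class Priority(Enum):
    """Hypothetical labels for the priorities named in the answer above."""
    GENERAL_QUALITY = "general_quality"
    LONG_CONTEXT_AND_SAFETY = "long_context_and_safety"
    SPEED = "speed"
    COST_CONTROL = "cost_control"


# Restates the FAQ answer as data; adjust to your own evaluation results.
PROVIDER_BY_PRIORITY = {
    Priority.GENERAL_QUALITY: "OpenAI",
    Priority.LONG_CONTEXT_AND_SAFETY: "Anthropic",
    Priority.SPEED: "Groq",
    Priority.COST_CONTROL: "Open-source models via Together AI or Replicate",
}


def suggest_provider(priority: Priority) -> str:
    """Return the provider the FAQ associates with a given priority."""
    return PROVIDER_BY_PRIORITY[priority]


if __name__ == "__main__":
    print(suggest_provider(Priority.SPEED))
```

Keeping the mapping as data rather than branching logic makes it easy to revise when your own tests point to a different choice.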

Build your AI agent stack

These recommendations are generated from real community data — GitHub stars, downloads, Stack Overflow activity, and 45+ verified integrations. Customize them for your specific requirements.